test

commit

152c814126

|

|

@ -45,17 +45,29 @@ jobs:

|

|||

Compile-check:

|

||||

runs-on: ubuntu-latest

|

||||

steps:

|

||||

- uses: actions/checkout@v1

|

||||

- uses: actions/checkout@v2

|

||||

# checkout@v2 does not support git submodules, so run the submodule commands manually.

|

||||

- name: checkout submodules

|

||||

shell: bash

|

||||

run: |

|

||||

git submodule sync --recursive

|

||||

git -c protocol.version=2 submodule update --init --force --recursive --depth=1

|

||||

- name: Set up JDK 1.8

|

||||

uses: actions/setup-java@v1

|

||||

with:

|

||||

java-version: 1.8

|

||||

- name: Compile

|

||||

run: mvn -U -B -T 1C clean install -Prelease -Dmaven.compile.fork=true -Dmaven.test.skip=true

|

||||

run: mvn -B clean compile package -Prelease -Dmaven.test.skip=true

|

||||

License-check:

|

||||

runs-on: ubuntu-latest

|

||||

steps:

|

||||

- uses: actions/checkout@v1

|

||||

- uses: actions/checkout@v2

|

||||

# checkout@v2 does not support git submodules, so run the submodule commands manually.

|

||||

- name: checkout submodules

|

||||

shell: bash

|

||||

run: |

|

||||

git submodule sync --recursive

|

||||

git -c protocol.version=2 submodule update --init --force --recursive --depth=1

|

||||

- name: Set up JDK 1.8

|

||||

uses: actions/setup-java@v1

|

||||

with:

|

||||

|

|

|

|||

|

|

@ -0,0 +1,75 @@

|

|||

#

|

||||

# Licensed to the Apache Software Foundation (ASF) under one or more

|

||||

# contributor license agreements. See the NOTICE file distributed with

|

||||

# this work for additional information regarding copyright ownership.

|

||||

# The ASF licenses this file to You under the Apache License, Version 2.0

|

||||

# (the "License"); you may not use this file except in compliance with

|

||||

# the License. You may obtain a copy of the License at

|

||||

#

|

||||

# http://www.apache.org/licenses/LICENSE-2.0

|

||||

#

|

||||

# Unless required by applicable law or agreed to in writing, software

|

||||

# distributed under the License is distributed on an "AS IS" BASIS,

|

||||

# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

|

||||

# See the License for the specific language governing permissions and

|

||||

# limitations under the License.

|

||||

#

|

||||

|

||||

on: ["pull_request"]

|

||||

env:

|

||||

DOCKER_DIR: ./docker

|

||||

LOG_DIR: /tmp/dolphinscheduler

|

||||

|

||||

name: e2e Test

|

||||

|

||||

jobs:

|

||||

|

||||

build:

|

||||

name: Test

|

||||

runs-on: ubuntu-latest

|

||||

steps:

|

||||

|

||||

- uses: actions/checkout@v2

|

||||

# checkout@v2 does not support git submodules, so run the submodule commands manually.

|

||||

- name: checkout submodules

|

||||

shell: bash

|

||||

run: |

|

||||

git submodule sync --recursive

|

||||

git -c protocol.version=2 submodule update --init --force --recursive --depth=1

|

||||

- uses: actions/cache@v1

|

||||

with:

|

||||

path: ~/.m2/repository

|

||||

key: ${{ runner.os }}-maven-${{ hashFiles('**/pom.xml') }}

|

||||

restore-keys: |

|

||||

${{ runner.os }}-maven-

|

||||

- name: Build Image

|

||||

run: |

|

||||

export VERSION=`cat $(pwd)/pom.xml| grep "SNAPSHOT</version>" | awk -F "-SNAPSHOT" '{print $1}' | awk -F ">" '{print $2}'`

|

||||

sh ./dockerfile/hooks/build

|

||||

- name: Docker Run

|

||||

run: |

|

||||

VERSION=`cat $(pwd)/pom.xml| grep "SNAPSHOT</version>" | awk -F "-SNAPSHOT" '{print $1}' | awk -F ">" '{print $2}'`

|

||||

mkdir -p /tmp/logs

|

||||

docker run -dit -e POSTGRESQL_USERNAME=test -e POSTGRESQL_PASSWORD=test -v /tmp/logs:/opt/dolphinscheduler/logs -p 8888:8888 dolphinscheduler:$VERSION all

|

||||

- name: Check Server Status

|

||||

run: sh ./dockerfile/hooks/check

|

||||

- name: Prepare e2e env

|

||||

run: |

|

||||

sudo apt-get install -y libxss1 libappindicator1 libindicator7 xvfb unzip

|

||||

wget https://dl.google.com/linux/direct/google-chrome-stable_current_amd64.deb

|

||||

sudo dpkg -i google-chrome*.deb

|

||||

sudo apt-get install -f -y

|

||||

wget -N https://chromedriver.storage.googleapis.com/80.0.3987.106/chromedriver_linux64.zip

|

||||

unzip chromedriver_linux64.zip

|

||||

sudo mv -f chromedriver /usr/local/share/chromedriver

|

||||

sudo ln -s /usr/local/share/chromedriver /usr/local/bin/chromedriver

|

||||

- name: Run e2e Test

|

||||

run: cd ./e2e && mvn -B clean test

|

||||

- name: Collect logs

|

||||

if: failure()

|

||||

uses: actions/upload-artifact@v1

|

||||

with:

|

||||

name: dslogs

|

||||

path: /tmp/logs
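The `Build Image` and `Docker Run` steps above derive `VERSION` from `pom.xml` with a `grep`/`awk` pipeline. As a rough illustration of what that pipeline computes, here is a minimal Python sketch; the function name `snapshot_versions` is hypothetical and not part of this commit. It assumes each matching version sits on its own line, as the shell pipeline also does.

```python
from typing import List


def snapshot_versions(pom_text: str) -> List[str]:
    """Mimic: grep "SNAPSHOT</version>" | awk -F "-SNAPSHOT" '{print $1}' | awk -F ">" '{print $2}'."""
    versions = []
    for line in pom_text.splitlines():
        # grep: keep only lines that close a SNAPSHOT version tag
        if "SNAPSHOT</version>" not in line:
            continue
        # first awk: take everything before "-SNAPSHOT"
        before_suffix = line.split("-SNAPSHOT")[0]
        # second awk: take the second ">"-separated field, i.e. the text after "<version>"
        fields = before_suffix.split(">")
        if len(fields) > 1:
            versions.append(fields[1])
    return versions
```

Like the shell version, this returns one entry per matching line, so a `pom.xml` with several SNAPSHOT version tags would yield several values. Unlike `awk`, the sketch skips a matching line that has no `>` before the suffix instead of emitting an empty string.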

|

||||

|

||||

|

||||

|

|

@ -34,7 +34,13 @@ jobs:

|

|||

matrix:

|

||||

os: [ubuntu-latest, macos-latest]

|

||||

steps:

|

||||

- uses: actions/checkout@v1

|

||||

- uses: actions/checkout@v2

|

||||

# checkout@v2 does not support git submodules, so run the submodule commands manually.

|

||||

- name: checkout submodules

|

||||

shell: bash

|

||||

run: |

|

||||

git submodule sync --recursive

|

||||

git -c protocol.version=2 submodule update --init --force --recursive --depth=1

|

||||

- name: Set up Node.js

|

||||

uses: actions/setup-node@v1

|

||||

with:

|

||||

|

|

@ -49,7 +55,13 @@ jobs:

|

|||

License-check:

|

||||

runs-on: ubuntu-latest

|

||||

steps:

|

||||

- uses: actions/checkout@v1

|

||||

- uses: actions/checkout@v2

|

||||

# checkout@v2 does not support git submodules, so run the submodule commands manually.

|

||||

- name: checkout submodules

|

||||

shell: bash

|

||||

run: |

|

||||

git submodule sync --recursive

|

||||

git -c protocol.version=2 submodule update --init --force --recursive --depth=1

|

||||

- name: Set up JDK 1.8

|

||||

uses: actions/setup-java@v1

|

||||

with:

|

||||

|

|

|

|||

|

|

@ -20,7 +20,6 @@ on:

|

|||

push:

|

||||

branches:

|

||||

- dev

|

||||

- refactor-worker

|

||||

env:

|

||||

DOCKER_DIR: ./docker

|

||||

LOG_DIR: /tmp/dolphinscheduler

|

||||

|

|

@ -84,4 +83,4 @@ jobs:

|

|||

mkdir -p ${LOG_DIR}

|

||||

cd ${DOCKER_DIR}

|

||||

docker-compose logs db > ${LOG_DIR}/db.txt

|

||||

continue-on-error: true

|

||||

continue-on-error: true

|

||||

110

CONTRIBUTING.md

|

|

@ -1,35 +1,53 @@

|

|||

* First from the remote repository *https://github.com/apache/incubator-dolphinscheduler.git* fork code to your own repository

|

||||

|

||||

* there are three branches in the remote repository currently:

|

||||

* master normal delivery branch

|

||||

After the stable version is released, the code for the stable version branch is merged into the master branch.

|

||||

# Development

|

||||

|

||||

* dev daily development branch

|

||||

The daily development branch, the newly submitted code can pull requests to this branch.

|

||||

Start by forking the dolphinscheduler GitHub repository, make changes in a branch and then send a pull request.

|

||||

|

||||

## Set up your dolphinscheduler GitHub Repository

|

||||

|

||||

* Clone your own warehouse to your local

|

||||

There are three branches in the remote repository currently:

|

||||

- `master` : normal delivery branch. After the stable version is released, the code for the stable version branch is merged into the master branch.

|

||||

|

||||

- `dev` : daily development branch. Newly submitted code should be sent as pull requests to this branch.

|

||||

|

||||

- `x.x.x-release` : the stable release version.

|

||||

|

||||

`git clone https://github.com/apache/incubator-dolphinscheduler.git`

|

||||

So, you should base your work on the `dev` branch.

|

||||

|

||||

* Add remote repository address, named upstream

|

||||

|

||||

`git remote add upstream https://github.com/apache/incubator-dolphinscheduler.git`

|

||||

|

||||

* View repository:

|

||||

|

||||

`git remote -v`

|

||||

|

||||

> There will be two repositories at this time: origin (your own warehouse) and upstream (remote repository)

|

||||

|

||||

* Get/update remote repository code (already the latest code, skip it)

|

||||

|

||||

`git fetch upstream`

|

||||

|

||||

|

||||

* Synchronize remote repository code to local repository

|

||||

After forking the [dolphinscheduler upstream source repository](https://github.com/apache/incubator-dolphinscheduler/fork) to your personal repository, you can set up your personal development environment.

|

||||

|

||||

```sh

|

||||

$ cd <your work directory>

|

||||

$ git clone <your personal forked dolphinscheduler repo>

|

||||

$ cd incubator-dolphinscheduler

|

||||

```

|

||||

|

||||

## Set git remote as ``upstream``

|

||||

|

||||

Add the remote repository address and name it upstream:

|

||||

|

||||

```sh

|

||||

git remote add upstream https://github.com/apache/incubator-dolphinscheduler.git

|

||||

```

|

||||

|

||||

View repository:

|

||||

|

||||

```sh

|

||||

git remote -v

|

||||

```

|

||||

|

||||

At this point there will be two remotes: origin (your own fork) and upstream (the remote repository).

|

||||

|

||||

Get or update the remote repository code (skip this step if your code is already up to date).

|

||||

|

||||

|

||||

```sh

|

||||

git fetch upstream

|

||||

```

|

||||

|

||||

Synchronize remote repository code to local repository

|

||||

|

||||

```sh

|

||||

git checkout dev

|

||||

git merge --no-ff upstream/dev

|

||||

```

|

||||

|

|

@ -41,24 +59,46 @@ git checkout -b dev-1.0 upstream/dev-1.0

|

|||

git push --set-upstream origin dev-1.0

|

||||

```

|

||||

|

||||

* After modifying the code locally, submit it to your own repository:

|

||||

## Create your feature branch

|

||||

Before making code changes, make sure you create a separate branch for them.

|

||||

|

||||

`git commit -m 'test commit'`

|

||||

`git push`

|

||||

```sh

|

||||

$ git checkout -b <your-feature>

|

||||

```

|

||||

|

||||

* Submit changes to the remote repository

|

||||

## Commit changes

|

||||

After modifying the code locally, commit the changes:

|

||||

|

||||

```sh

|

||||

|

||||

git commit -m 'information about your feature'

|

||||

```

|

||||

|

||||

## Push to the branch

|

||||

|

||||

|

||||

Push your locally committed changes to the remote origin (your fork).

|

||||

|

||||

```

|

||||

$ git push origin <your-feature>

|

||||

```

|

||||

|

||||

## Create a pull request

|

||||

|

||||

After pushing your changes to your remote repository, click the new pull request button on the following GitHub page.

|

||||

|

||||

* On the github page, click on the new pull request.

|

||||

<p align = "center">

|

||||

<img src = "http://geek.analysys.cn/static/upload/221/2019-04-02/90f3abbf-70ef-4334-b8d6-9014c9cf4c7f.png"width ="60%"/>

|

||||

</ p>

|

||||

<img src = "http://geek.analysys.cn/static/upload/221/2019-04-02/90f3abbf-70ef-4334-b8d6-9014c9cf4c7f.png" width ="60%"/>

|

||||

</p>

|

||||

|

||||

|

||||

Select your modified branch and the target branch to merge into, then create the pull request.

|

||||

|

||||

* Select the modified local branch and the branch to merge past to create a pull request.

|

||||

<p align = "center">

|

||||

<img src = "http://geek.analysys.cn/static/upload/221/2019-04-02/fe7eecfe-2720-4736-951b-b3387cf1ae41.png"width ="60%"/>

|

||||

</ p>

|

||||

<img src = "http://geek.analysys.cn/static/upload/221/2019-04-02/fe7eecfe-2720-4736-951b-b3387cf1ae41.png" width ="60%"/>

|

||||

</p>

|

||||

|

||||

* Next, the administrator is responsible for **merging** to complete the pull request

|

||||

Next, the administrator is responsible for **merging** to complete the pull request.

|

||||

|

||||

|

||||

|

||||

|

|

|

|||

28

README.md

|

|

@ -17,7 +17,7 @@ Dolphin Scheduler Official Website

|

|||

|

||||

### Design features:

|

||||

|

||||

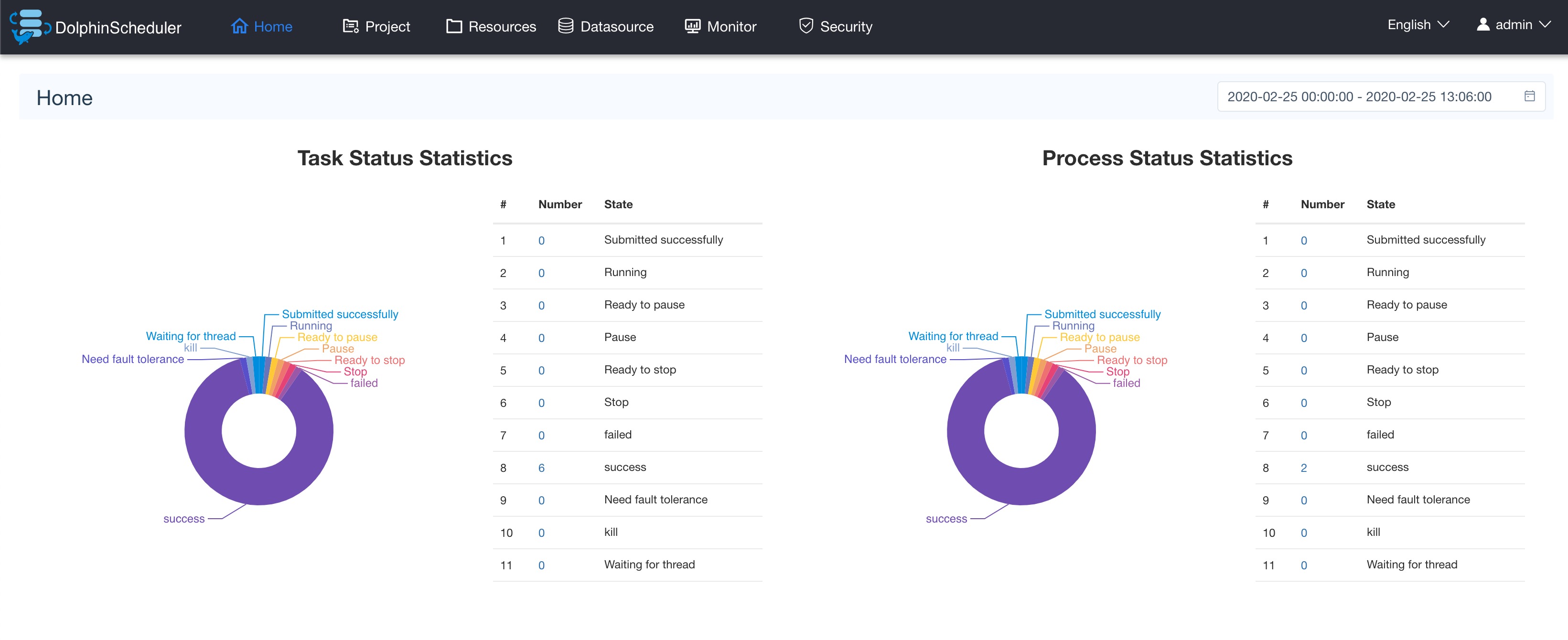

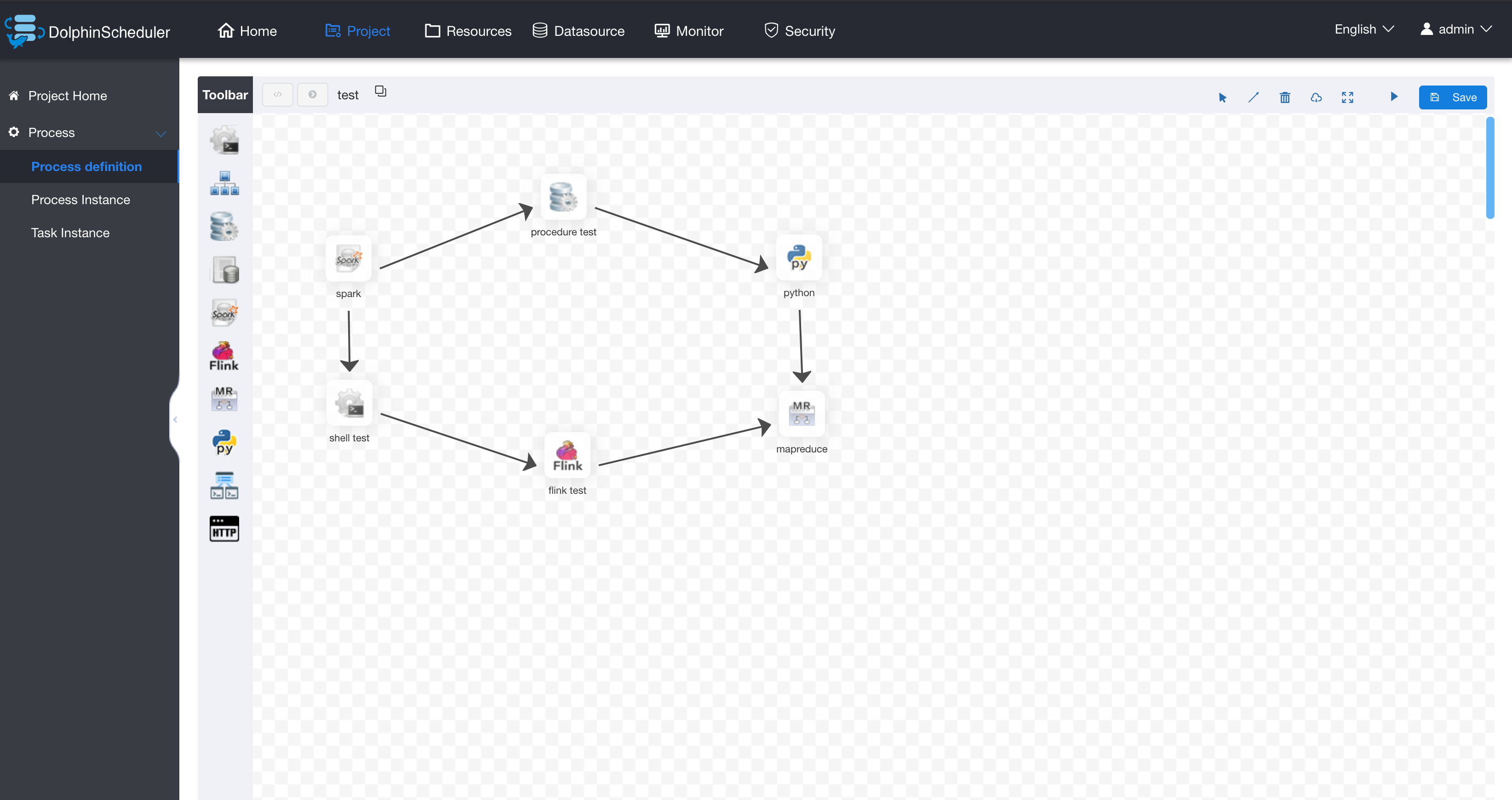

A distributed and easy-to-expand visual DAG workflow scheduling system. Dedicated to solving the complex dependencies in data processing, making the scheduling system `out of the box` for data processing.

|

||||

A distributed and easy-to-extend visual DAG workflow scheduling system. Dedicated to solving the complex dependencies in data processing, making the scheduling system `out of the box` for data processing.

|

||||

Its main objectives are as follows:

|

||||

|

||||

- Associate the tasks according to their dependencies in a DAG graph, which can visualize the running state of tasks in real time.

|

||||

|

|

@ -45,17 +45,16 @@ HA is supported by itself | All process definition operations are visualized, dr

|

|||

Overload processing: Task queue mechanism, the number of schedulable tasks on a single machine can be flexibly configured, when too many tasks will be cached in the task queue, will not cause machine jam. | One-click deployment | Supports traditional shell tasks, and also support big data platform task scheduling: MR, Spark, SQL (mysql, postgresql, hive, sparksql), Python, Procedure, Sub_Process | |

|

||||

|

||||

|

||||

|

||||

|

||||

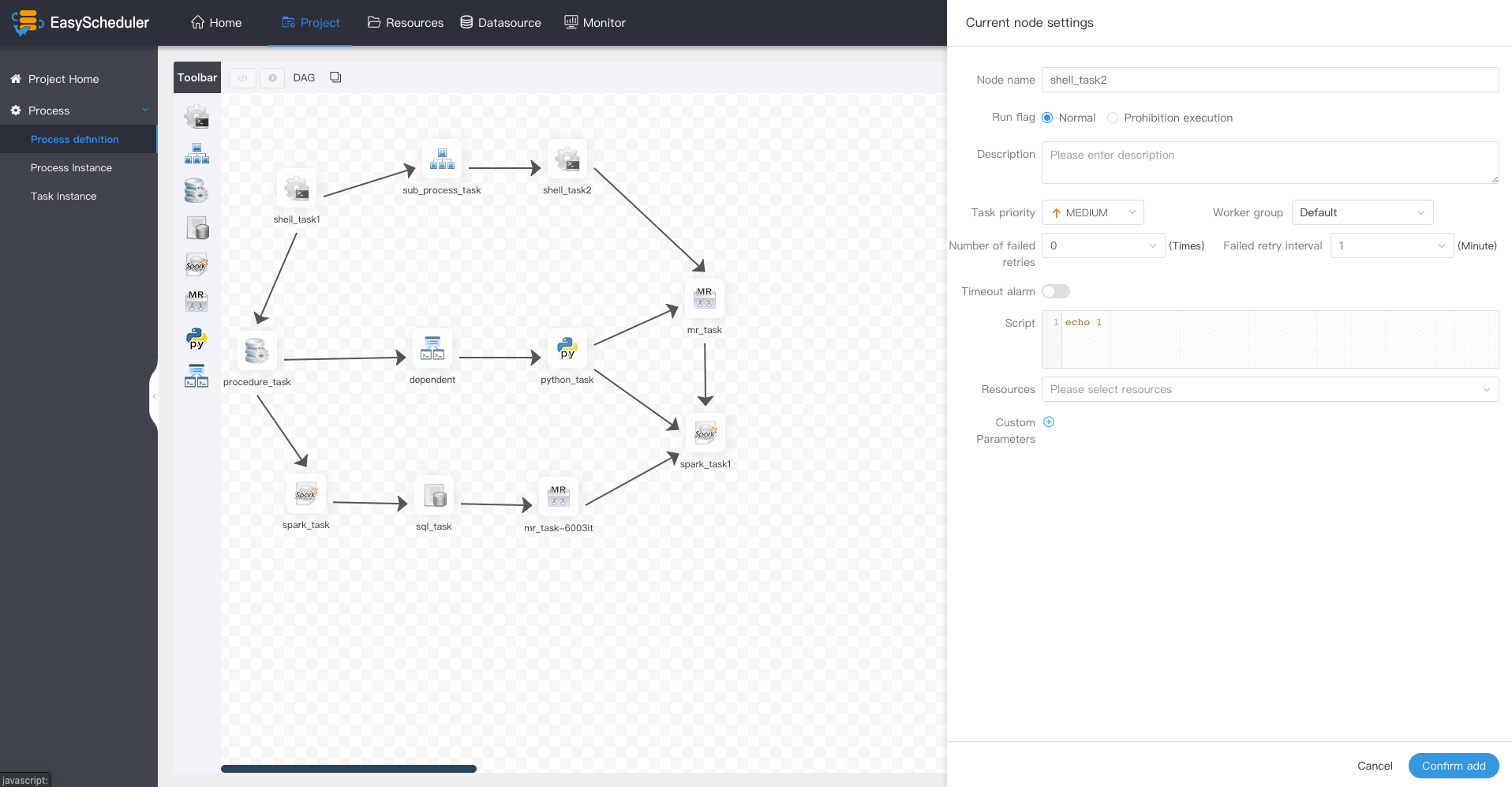

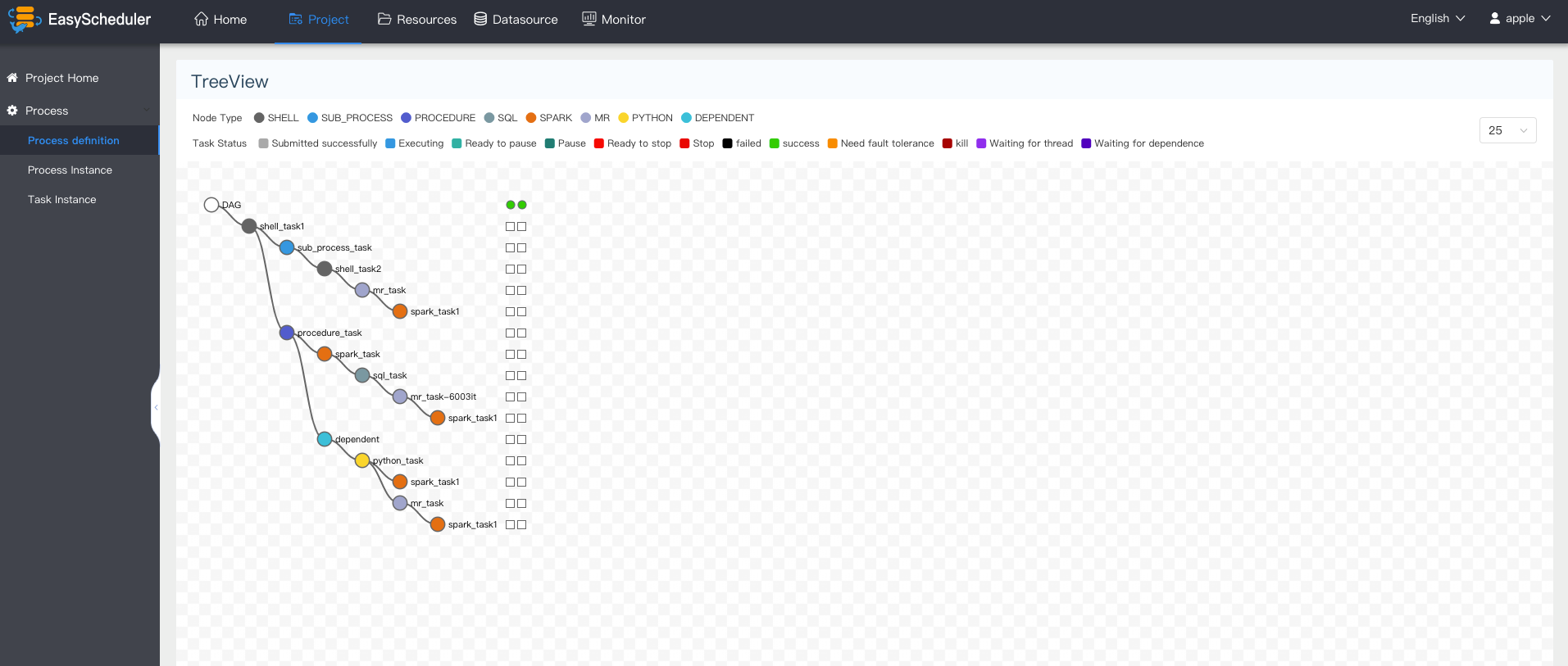

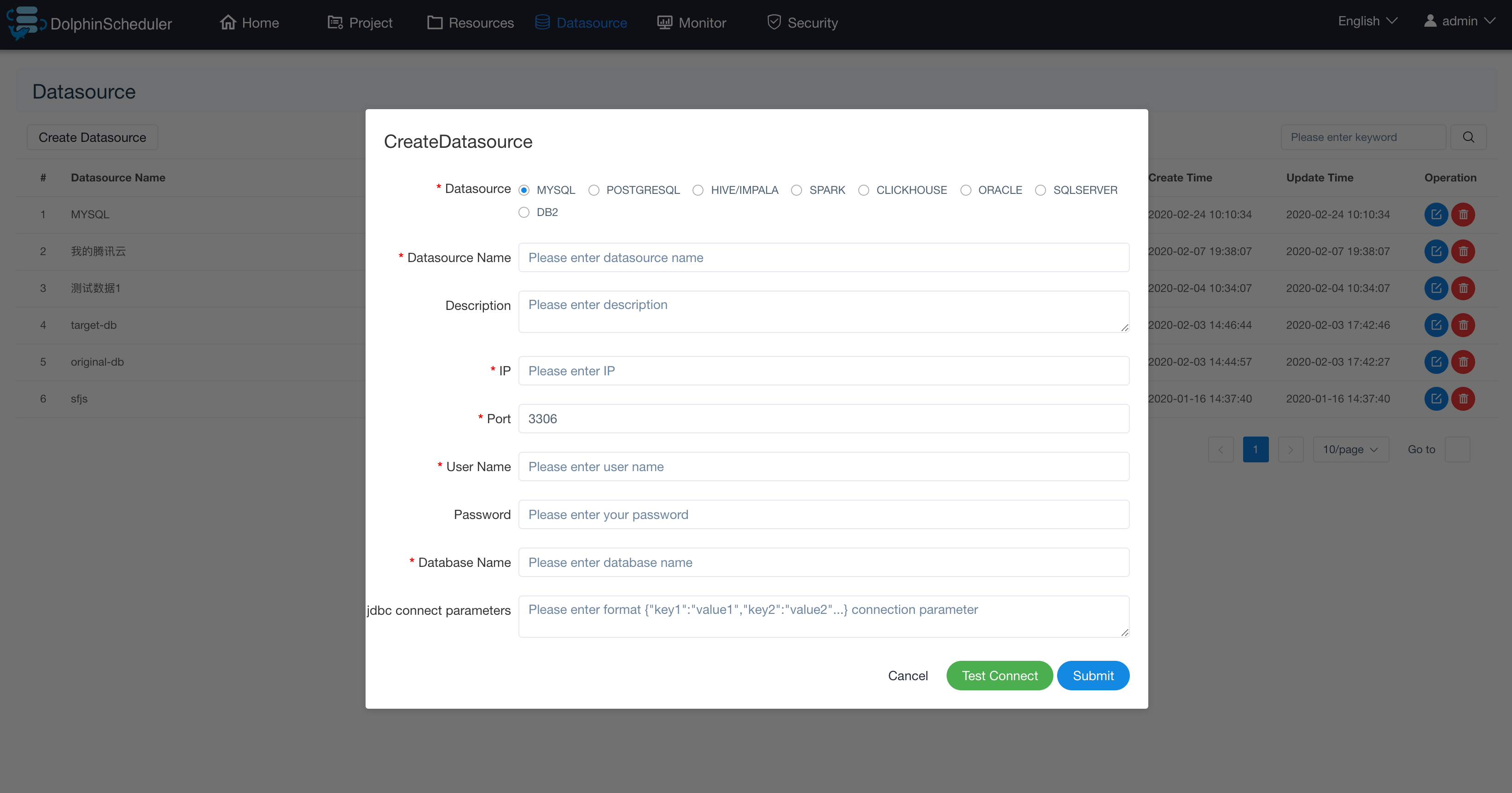

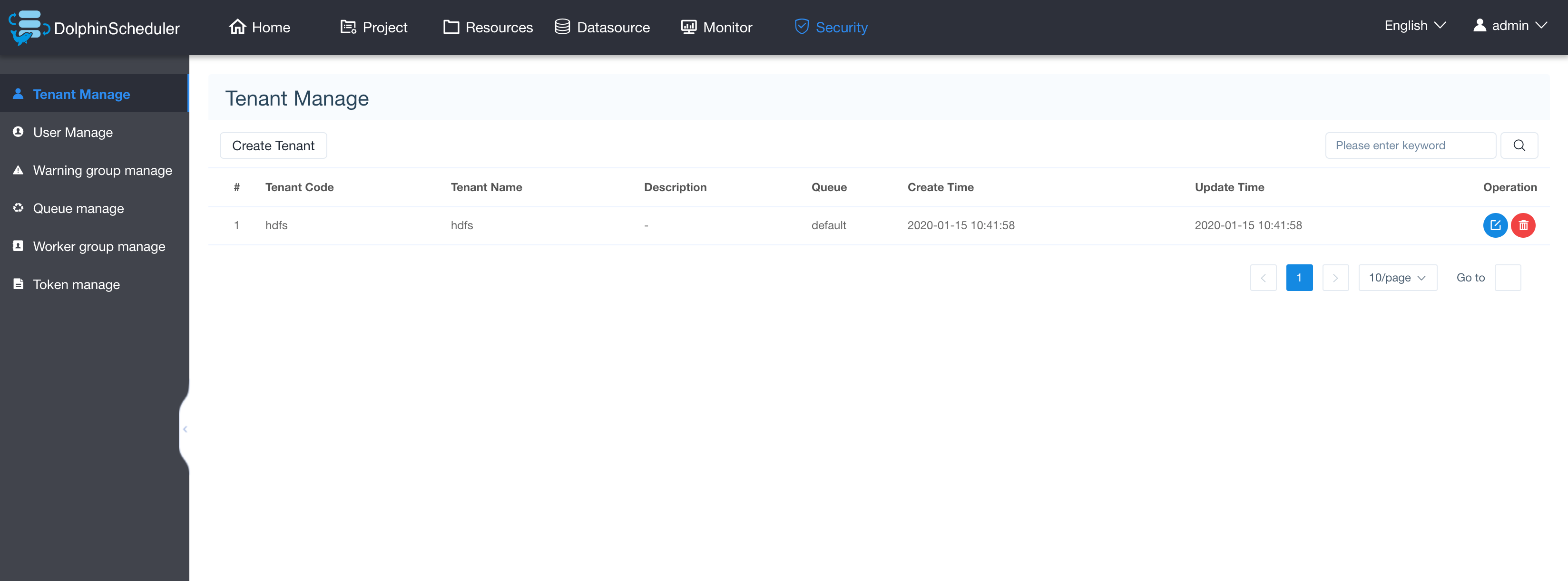

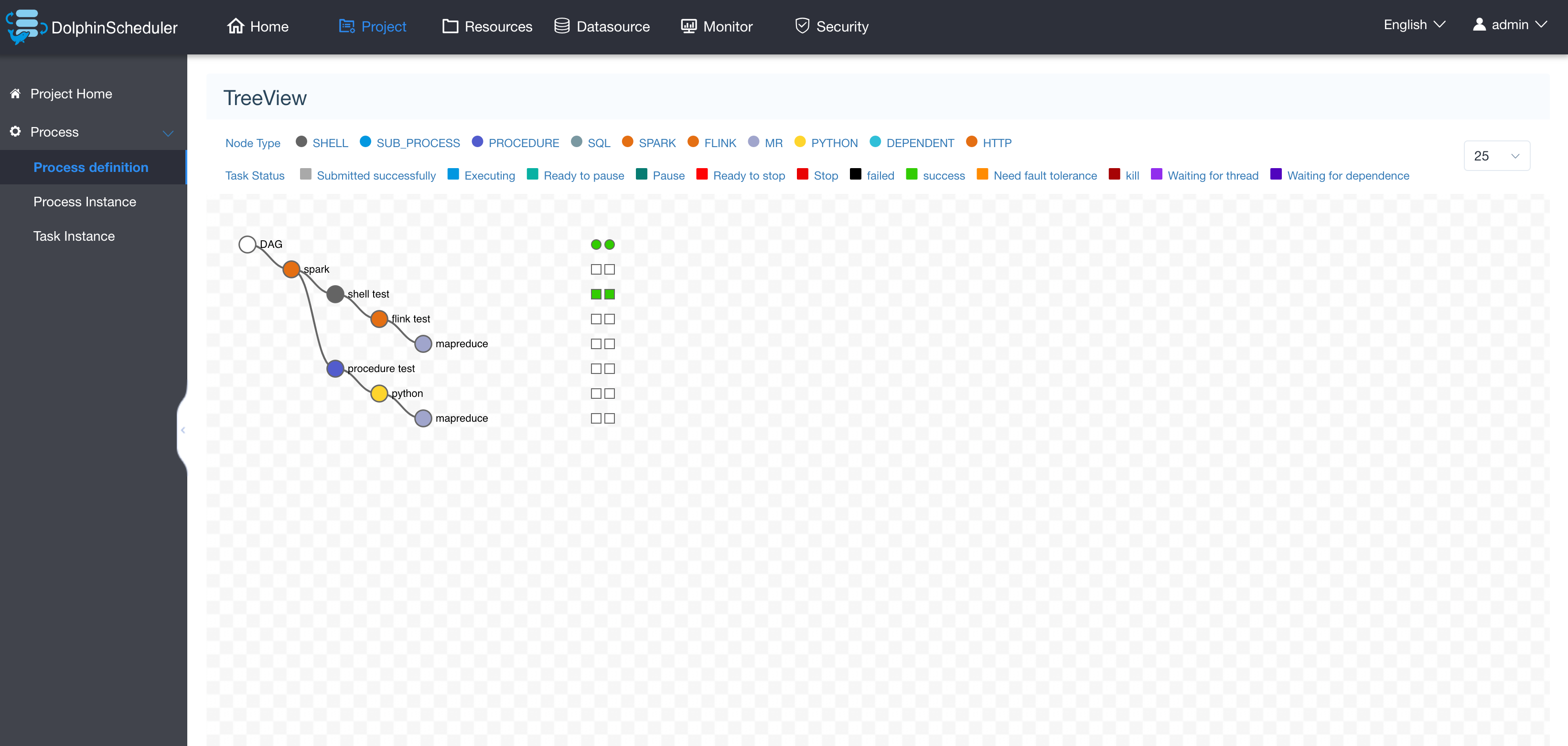

### System partial screenshot

|

||||

### Document

|

||||

|

||||

- <a href="https://dolphinscheduler.apache.org/en-us/docs/1.2.0/user_doc/backend-deployment.html" target="_blank">Backend deployment documentation</a>

|

||||

|

|

@ -100,16 +99,9 @@ It is because of the shoulders of these open source projects that the birth of t

|

|||

### Get Help

|

||||

1. Submit an issue

|

||||

1. Subscribe to the mailing list: https://dolphinscheduler.apache.org/en-us/docs/development/subscribe.html, then send mail to dev@dolphinscheduler.apache.org

|

||||

1. Contact WeChat group manager, ID 510570367. This is for Mandarin(CN) discussion.

|

||||

1. Contact WeChat(dailidong66). This is just for Mandarin(CN) discussion.

|

||||

|

||||

### License

|

||||

Please refer to [LICENSE](https://github.com/apache/incubator-dolphinscheduler/blob/dev/LICENSE) file.

|

||||

|

||||

|

||||

|

||||

|

||||

|

||||

|

||||

|

||||

|

||||

|

||||

|

|

|

|||

|

|

@ -36,11 +36,19 @@ Dolphin Scheduler Official Website

|

|||

|

||||

### System partial screenshot

|

||||

### Document

|

||||

|

||||

|

|

|

|||

|

|

@ -0,0 +1,158 @@

|

|||

{

|

||||

"DOLPHIN": {

|

||||

"service": [],

|

||||

"DOLPHIN_API": [

|

||||

{

|

||||

"name": "dolphin_api_port_check",

|

||||

"label": "dolphin_api_port_check",

|

||||

"description": "dolphin_api_port_check.",

|

||||

"interval": 10,

|

||||

"scope": "ANY",

|

||||

"source": {

|

||||

"type": "PORT",

|

||||

"uri": "{{dolphin-application-api/server.port}}",

|

||||

"default_port": 12345,

|

||||

"reporting": {

|

||||

"ok": {

|

||||

"text": "TCP OK - {0:.3f}s response on port {1}"

|

||||

},

|

||||

"warning": {

|

||||

"text": "TCP OK - {0:.3f}s response on port {1}",

|

||||

"value": 1.5

|

||||

},

|

||||

"critical": {

|

||||

"text": "Connection failed: {0} to {1}:{2}",

|

||||

"value": 5.0

|

||||

}

|

||||

}

|

||||

}

|

||||

}

|

||||

],

|

||||

"DOLPHIN_LOGGER": [

|

||||

{

|

||||

"name": "dolphin_logger_port_check",

|

||||

"label": "dolphin_logger_port_check",

|

||||

"description": "dolphin_logger_port_check.",

|

||||

"interval": 10,

|

||||

"scope": "ANY",

|

||||

"source": {

|

||||

"type": "PORT",

|

||||

"uri": "{{dolphin-common/loggerserver.rpc.port}}",

|

||||

"default_port": 50051,

|

||||

"reporting": {

|

||||

"ok": {

|

||||

"text": "TCP OK - {0:.3f}s response on port {1}"

|

||||

},

|

||||

"warning": {

|

||||

"text": "TCP OK - {0:.3f}s response on port {1}",

|

||||

"value": 1.5

|

||||

},

|

||||

"critical": {

|

||||

"text": "Connection failed: {0} to {1}:{2}",

|

||||

"value": 5.0

|

||||

}

|

||||

}

|

||||

}

|

||||

}

|

||||

],

|

||||

"DOLPHIN_MASTER": [

|

||||

{

|

||||

"name": "DOLPHIN_MASTER_CHECK",

|

||||

"label": "check dolphin scheduler master status",

|

||||

"description": "",

|

||||

"interval":10,

|

||||

"scope": "HOST",

|

||||

"enabled": true,

|

||||

"source": {

|

||||

"type": "SCRIPT",

|

||||

"path": "DOLPHIN/1.2.1/package/alerts/alert_dolphin_scheduler_status.py",

|

||||

"parameters": [

|

||||

|

||||

{

|

||||

"name": "connection.timeout",

|

||||

"display_name": "Connection Timeout",

|

||||

"value": 5.0,

|

||||

"type": "NUMERIC",

|

||||

"description": "The maximum time before this alert is considered to be CRITICAL",

|

||||

"units": "seconds",

|

||||

"threshold": "CRITICAL"

|

||||

},

|

||||

{

|

||||

"name": "alertName",

|

||||

"display_name": "alertName",

|

||||

"value": "DOLPHIN_MASTER",

|

||||

"type": "STRING",

|

||||

"description": "alert name"

|

||||

}

|

||||

]

|

||||

}

|

||||

}

|

||||

],

|

||||

"DOLPHIN_WORKER": [

|

||||

{

|

||||

"name": "DOLPHIN_WORKER_CHECK",

|

||||

"label": "check dolphin scheduler worker status",

|

||||

"description": "",

|

||||

"interval":10,

|

||||

"scope": "HOST",

|

||||

"enabled": true,

|

||||

"source": {

|

||||

"type": "SCRIPT",

|

||||

"path": "DOLPHIN/1.2.1/package/alerts/alert_dolphin_scheduler_status.py",

|

||||

"parameters": [

|

||||

|

||||

{

|

||||

"name": "connection.timeout",

|

||||

"display_name": "Connection Timeout",

|

||||

"value": 5.0,

|

||||

"type": "NUMERIC",

|

||||

"description": "The maximum time before this alert is considered to be CRITICAL",

|

||||

"units": "seconds",

|

||||

"threshold": "CRITICAL"

|

||||

},

|

||||

{

|

||||

"name": "alertName",

|

||||

"display_name": "alertName",

|

||||

"value": "DOLPHIN_WORKER",

|

||||

"type": "STRING",

|

||||

"description": "alert name"

|

||||

}

|

||||

]

|

||||

}

|

||||

}

|

||||

],

|

||||

"DOLPHIN_ALERT": [

|

||||

{

|

||||

"name": "DOLPHIN_DOLPHIN_ALERT_CHECK",

|

||||

"label": "check dolphin scheduler alert status",

|

||||

"description": "",

|

||||

"interval":10,

|

||||

"scope": "HOST",

|

||||

"enabled": true,

|

||||

"source": {

|

||||

"type": "SCRIPT",

|

||||

"path": "DOLPHIN/1.2.1/package/alerts/alert_dolphin_scheduler_status.py",

|

||||

"parameters": [

|

||||

|

||||

{

|

||||

"name": "connection.timeout",

|

||||

"display_name": "Connection Timeout",

|

||||

"value": 5.0,

|

||||

"type": "NUMERIC",

|

||||

"description": "The maximum time before this alert is considered to be CRITICAL",

|

||||

"units": "seconds",

|

||||

"threshold": "CRITICAL"

|

||||

},

|

||||

{

|

||||

"name": "alertName",

|

||||

"display_name": "alertName",

|

||||

"value": "DOLPHIN_ALERT",

|

||||

"type": "STRING",

|

||||

"description": "alert name"

|

||||

}

|

||||

]

|

||||

}

|

||||

}

|

||||

]

|

||||

}

|

||||

}
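The `PORT` alerts defined above carry a `warning` threshold of 1.5 and a `critical` threshold of 5.0, and their `text` templates use Python-style format fields. The following is a minimal sketch of how such a check could be evaluated, assuming the thresholds are TCP connect times in seconds; the names `classify` and `check_port` are hypothetical and this is not Ambari's own implementation.

```python
import socket
import time

# Message templates copied from the alert definition above
OK_TEXT = "TCP OK - {0:.3f}s response on port {1}"
CRITICAL_TEXT = "Connection failed: {0} to {1}:{2}"


def classify(elapsed_seconds, warning=1.5, critical=5.0):
    """Map a connect time in seconds to an alert state (assumed semantics)."""
    if elapsed_seconds >= critical:
        return "CRITICAL"
    if elapsed_seconds >= warning:
        return "WARNING"
    return "OK"


def check_port(host, port, warning=1.5, critical=5.0):
    """Open a TCP connection and report (state, message)."""
    start = time.time()
    try:
        # Give up once the critical threshold has elapsed
        with socket.create_connection((host, port), timeout=critical):
            elapsed = time.time() - start
    except OSError as err:
        # Refused connections and timeouts both count as a failed check
        return "CRITICAL", CRITICAL_TEXT.format(err, host, port)
    # The definition uses the same template for the OK and WARNING states
    return classify(elapsed, warning, critical), OK_TEXT.format(elapsed, port)
```

For example, `check_port("localhost", 12345)` would probe the default API port from the definition above and return a state together with the formatted message.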

|

||||

|

|

@ -0,0 +1,144 @@

|

|||

<!--

|

||||

~ Licensed to the Apache Software Foundation (ASF) under one or more

|

||||

~ contributor license agreements. See the NOTICE file distributed with

|

||||

~ this work for additional information regarding copyright ownership.

|

||||

~ The ASF licenses this file to You under the Apache License, Version 2.0

|

||||

~ (the "License"); you may not use this file except in compliance with

|

||||

~ the License. You may obtain a copy of the License at

|

||||

~

|

||||

~ http://www.apache.org/licenses/LICENSE-2.0

|

||||

~

|

||||

~ Unless required by applicable law or agreed to in writing, software

|

||||

~ distributed under the License is distributed on an "AS IS" BASIS,

|

||||

~ WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

|

||||

~ See the License for the specific language governing permissions and

|

||||

~ limitations under the License.

|

||||

-->

|

||||

<configuration>

|

||||

<property>

|

||||

<name>alert.type</name>

|

||||

<value>EMAIL</value>

|

||||

<description>alert type is EMAIL/SMS</description>

|

||||

<on-ambari-upgrade add="true"/>

|

||||

</property>

|

||||

<property>

|

||||

<name>mail.protocol</name>

|

||||

<value>SMTP</value>

|

||||

<description></description>

|

||||

<on-ambari-upgrade add="true"/>

|

||||

</property>

|

||||

<property>

|

||||

<name>mail.server.host</name>

|

||||

<value>xxx.xxx.com</value>

|

||||

<description></description>

|

||||

<on-ambari-upgrade add="true"/>

|

||||

</property>

|

||||

<property>

|

||||

<name>mail.server.port</name>

|

||||

<value>25</value>

|

||||

<value-attributes>

|

||||

<type>int</type>

|

||||

</value-attributes>

|

||||

<description></description>

|

||||

<on-ambari-upgrade add="true"/>

|

||||

</property>

|

||||

<property>

|

||||

<name>mail.sender</name>

|

||||

<value>admin</value>

|

||||

<description></description>

|

||||

<on-ambari-upgrade add="true"/>

|

||||

</property>

|

||||

<property>

|

||||

<name>mail.user</name>

|

||||

<value>admin</value>

|

||||

<description></description>

|

||||

<on-ambari-upgrade add="true"/>

|

||||

</property>

|

||||

<property>

|

||||

<name>mail.passwd</name>

|

||||

<value>000000</value>

|

||||

<description></description>

|

||||

<property-type>PASSWORD</property-type>

|

||||

<value-attributes>

|

||||

<type>password</type>

|

||||

</value-attributes>

|

||||

<on-ambari-upgrade add="true"/>

|

||||

</property>

|

||||

|

||||

<property>

|

||||

<name>mail.smtp.starttls.enable</name>

|

||||

<value>true</value>

|

||||

<value-attributes>

|

||||

<type>boolean</type>

|

||||

</value-attributes>

|

||||

<description></description>

|

||||

<on-ambari-upgrade add="true"/>

|

||||

</property>

|

||||

<property>

|

||||

<name>mail.smtp.ssl.enable</name>

|

||||

<value>true</value>

|

||||

<value-attributes>

|

||||

<type>boolean</type>

|

||||

</value-attributes>

|

||||

<description></description>

|

||||

<on-ambari-upgrade add="true"/>

|

||||

</property>

|

||||

<property>

|

||||

<name>mail.smtp.ssl.trust</name>

|

||||

<value>xxx.xxx.com</value>

|

||||

<description></description>

|

||||

<on-ambari-upgrade add="true"/>

|

||||

</property>

|

||||

|

||||

<property>

|

||||

<name>xls.file.path</name>

|

||||

<value>/tmp/xls</value>

|

||||

<description></description>

|

||||

<on-ambari-upgrade add="true"/>

|

||||

</property>

|

||||

|

||||

<property>

|

||||

<name>enterprise.wechat.enable</name>

|

||||

<value>false</value>

|

||||

<description></description>

|

||||

<value-attributes>

|

||||

<type>value-list</type>

|

||||

<entries>

|

||||

<entry>

|

||||

<value>true</value>

|

||||

<label>Enabled</label>

|

||||

</entry>

|

||||

<entry>

|

||||

<value>false</value>

|

||||

<label>Disabled</label>

|

||||

</entry>

|

||||

</entries>

|

||||

<selection-cardinality>1</selection-cardinality>

|

||||

</value-attributes>

|

||||

<on-ambari-upgrade add="true"/>

|

||||

</property>

|

||||

<property>

|

||||

<name>enterprise.wechat.corp.id</name>

|

||||

<value>wechatId</value>

|

||||

<description></description>

|

||||

<on-ambari-upgrade add="true"/>

|

||||

</property>

|

||||

<property>

|

||||

<name>enterprise.wechat.secret</name>

|

||||

<value>secret</value>

|

||||

<description></description>

|

||||

<on-ambari-upgrade add="true"/>

|

||||

</property>

|

||||

<property>

|

||||

<name>enterprise.wechat.agent.id</name>

|

||||

<value>agentId</value>

|

||||

<description></description>

|

||||

<on-ambari-upgrade add="true"/>

|

||||

</property>

|

||||

<property>

|

||||

<name>enterprise.wechat.users</name>

|

||||

<value>wechatUsers</value>

|

||||

<description></description>

|

||||

<on-ambari-upgrade add="true"/>

|

||||

</property>

|

||||

</configuration>
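Configuration files like the one above follow a flat `<configuration>`/`<property>` layout with `<name>` and `<value>` children. As a minimal sketch of how the defaults could be read for inspection, the snippet below collects them into a dictionary using only the standard library; the helper name `load_properties` is hypothetical and not part of this commit.

```python
import xml.etree.ElementTree as ET


def load_properties(xml_text):
    """Return {name: default value} for every <property> under <configuration>."""
    root = ET.fromstring(xml_text)
    props = {}
    for prop in root.findall("property"):
        name = prop.findtext("name")
        if name is None:
            continue
        # findtext only looks at direct children, so the <value> elements nested
        # in <value-attributes><entries> are not mistaken for the default value
        props[name.strip()] = (prop.findtext("value") or "").strip()
    return props
```

All values come back as strings; a caller that needs typed values could consult each property's `<value-attributes><type>` element, which this sketch ignores.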

|

||||

|

|

@ -0,0 +1,71 @@

|

|||

<!--

|

||||

~ Licensed to the Apache Software Foundation (ASF) under one or more

|

||||

~ contributor license agreements. See the NOTICE file distributed with

|

||||

~ this work for additional information regarding copyright ownership.

|

||||

~ The ASF licenses this file to You under the Apache License, Version 2.0

|

||||

~ (the "License"); you may not use this file except in compliance with

|

||||

~ the License. You may obtain a copy of the License at

|

||||

~

|

||||

~ http://www.apache.org/licenses/LICENSE-2.0

|

||||

~

|

||||

~ Unless required by applicable law or agreed to in writing, software

|

||||

~ distributed under the License is distributed on an "AS IS" BASIS,

|

||||

~ WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

|

||||

~ See the License for the specific language governing permissions and

|

||||

~ limitations under the License.

|

||||

-->

|

||||

<configuration>

|

||||

<property>

|

||||

<name>server.port</name>

|

||||

<value>12345</value>

|

||||

<description>

|

||||

server port

|

||||

</description>

|

||||

<value-attributes>

|

||||

<type>int</type>

|

||||

</value-attributes>

|

||||

</property>

|

||||

<property>

|

||||

<name>server.servlet.session.timeout</name>

|

||||

<value>7200</value>

|

||||

<value-attributes>

|

||||

<type>int</type>

|

||||

</value-attributes>

|

||||

<description>

|

||||

</description>

|

||||

</property>

|

||||

<property>

|

||||

<name>spring.servlet.multipart.max-file-size</name>

|

||||

<value>1024</value>

|

||||

<value-attributes>

|

||||

<unit>MB</unit>

|

||||

<type>int</type>

|

||||

</value-attributes>

|

||||

<description>

|

||||

</description>

|

||||

</property>

|

||||

<property>

|

||||

<name>spring.servlet.multipart.max-request-size</name>

|

||||

<value>1024</value>

|

||||

<value-attributes>

|

||||

<unit>MB</unit>

|

||||

<type>int</type>

|

||||

</value-attributes>

|

||||

<description>

|

||||

</description>

|

||||

</property>

|

||||

<property>

|

||||

<name>server.jetty.max-http-post-size</name>

|

||||

<value>5000000</value>

|

||||

<value-attributes>

|

||||

<type>int</type>

|

||||

</value-attributes>

|

||||

<description>

|

||||

</description>

|

||||

</property>

|

||||

<property>

|

||||

<name>spring.messages.encoding</name>

|

||||

<value>UTF-8</value>

|

||||

<description></description>

|

||||

</property>

|

||||

</configuration>

|

||||

|

|

@ -0,0 +1,467 @@

|

|||

<!--

|

||||

~ Licensed to the Apache Software Foundation (ASF) under one or more

|

||||

~ contributor license agreements. See the NOTICE file distributed with

|

||||

~ this work for additional information regarding copyright ownership.

|

||||

~ The ASF licenses this file to You under the Apache License, Version 2.0

|

||||

~ (the "License"); you may not use this file except in compliance with

|

||||

~ the License. You may obtain a copy of the License at

|

||||

~

|

||||

~ http://www.apache.org/licenses/LICENSE-2.0

|

||||

~

|

||||

~ Unless required by applicable law or agreed to in writing, software

|

||||

~ distributed under the License is distributed on an "AS IS" BASIS,

|

||||

~ WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

|

||||

~ See the License for the specific language governing permissions and

|

||||

~ limitations under the License.

|

||||

-->

|

||||

<configuration>

|

||||

<property>

|

||||

<name>spring.datasource.initialSize</name>

|

||||

<value>5</value>

|

||||

<description>

|

||||

Initial number of connections

|

||||

</description>

|

||||

<value-attributes>

|

||||

<type>int</type>

|

||||

</value-attributes>

|

||||

<on-ambari-upgrade add="true"/>

|

||||

</property>

|

||||

<property>

|

||||

<name>spring.datasource.minIdle</name>

|

||||

<value>5</value>

|

||||

<description>

|

||||

Minimum number of idle connections

|

||||

</description>

|

||||

<value-attributes>

|

||||

<type>int</type>

|

||||

</value-attributes>

|

||||

<on-ambari-upgrade add="true"/>

|

||||

</property>

|

||||

<property>

|

||||

<name>spring.datasource.maxActive</name>

|

||||

<value>50</value>

|

||||

<description>

|

||||

Maximum number of active connections

|

||||

</description>

|

||||

<value-attributes>

|

||||

<type>int</type>

|

||||

</value-attributes>

|

||||

<on-ambari-upgrade add="true"/>

|

||||

</property>

|

||||

<property>

|

||||

<name>spring.datasource.maxWait</name>

|

||||

<value>60000</value>

|

||||

<description>

|

||||

Maximum time to wait for a connection, in milliseconds.

|

||||

If configuring maxWait, fair locks are enabled by default and concurrency efficiency decreases.

|

||||

If necessary, unfair locks can be used by configuring the useUnfairLock attribute to true.

|

||||

</description>

|

||||

<value-attributes>

|

||||

<type>int</type>

|

||||

</value-attributes>

|

||||

<on-ambari-upgrade add="true"/>

|

||||

</property>

|

||||

<property>

|

||||

<name>spring.datasource.timeBetweenEvictionRunsMillis</name>

|

||||

<value>60000</value>

|

||||

<description>

|

||||

Interval, in milliseconds, between checks that close idle connections

|

||||

</description>

|

||||

<value-attributes>

|

||||

<type>int</type>

|

||||

</value-attributes>

|

||||

<on-ambari-upgrade add="true"/>

|

||||

</property>

|

||||

<property>

|

||||

<name>spring.datasource.timeBetweenConnectErrorMillis</name>

|

||||

<value>60000</value>

|

||||

<description>

|

||||

The Destroy thread detects the connection interval and closes the physical connection in milliseconds

|

||||

if the connection idle time is greater than or equal to minEvictableIdleTimeMillis.

|

||||

</description>

|

||||

<value-attributes>

|

||||

<type>int</type>

|

||||

</value-attributes>

|

||||

<on-ambari-upgrade add="true"/>

|

||||

</property>

|

||||

<property>

|

||||

<name>spring.datasource.minEvictableIdleTimeMillis</name>

|

||||

<value>300000</value>

|

||||

<description>

|

||||

The longest time a connection remains idle without being evicted, in milliseconds

|

||||

</description>

|

||||

<value-attributes>

|

||||

<type>int</type>

|

||||

</value-attributes>

|

||||

<on-ambari-upgrade add="true"/>

|

||||

</property>

|

||||

<property>

|

||||

<name>spring.datasource.validationQuery</name>

|

||||

<value>SELECT 1</value>

|

||||

<description>

|

||||

The SQL used to check whether the connection is valid requires a query statement.

|

||||

If validationQuery is null, testOnBorrow, testOnReturn, and testWhileIdle will not work.

|

||||

</description>

|

||||

<on-ambari-upgrade add="true"/>

|

||||

</property>

|

||||

<property>

|

||||

<name>spring.datasource.validationQueryTimeout</name>

|

||||

<value>3</value>

|

||||

<value-attributes>

|

||||

<type>int</type>

|

||||

</value-attributes>

|

||||

<description>

|

||||

Timeout for the connection validity check, in seconds

|

||||

</description>

|

||||

<on-ambari-upgrade add="true"/>

|

||||

</property>

|

||||

<property>

|

||||

<name>spring.datasource.testWhileIdle</name>

|

||||

<value>true</value>

|

||||

<value-attributes>

|

||||

<type>boolean</type>

|

||||

</value-attributes>

|

||||

<description>

|

||||

When applying for a connection,

|

||||

if the connection has been idle longer than timeBetweenEvictionRunsMillis,

|

||||

validationQuery is run to check whether the connection is valid

|

||||

</description>

|

||||

<on-ambari-upgrade add="true"/>

|

||||

</property>

|

||||

<property>

|

||||

<name>spring.datasource.testOnBorrow</name>

|

||||

<value>true</value>

|

||||

<value-attributes>

|

||||

<type>boolean</type>

|

||||

</value-attributes>

|

||||

<description>

|

||||

Run validationQuery to check that the connection is valid when it is borrowed from the pool

|

||||

</description>

|

||||

<on-ambari-upgrade add="true"/>

|

||||

</property>

|

||||

<property>

|

||||

<name>spring.datasource.testOnReturn</name>

|

||||

<value>false</value>

|

||||

<value-attributes>

|

||||

<type>boolean</type>

|

||||

</value-attributes>

|

||||

<description>

|

||||

Run validationQuery to check that the connection is valid when it is returned to the pool

|

||||

</description>

|

||||

<on-ambari-upgrade add="true"/>

|

||||

</property>

|

||||

<property>

|

||||

<name>spring.datasource.defaultAutoCommit</name>

|

||||

<value>true</value>

|

||||

<value-attributes>

|

||||

<type>boolean</type>

|

||||

</value-attributes>

|

||||

<description>

|

||||

</description>

|

||||

<on-ambari-upgrade add="true"/>

|

||||

</property>

|

||||

<property>

|

||||

<name>spring.datasource.keepAlive</name>

|

||||

<value>false</value>

|

||||

<value-attributes>

|

||||

<type>boolean</type>

|

||||

</value-attributes>

|

||||

<description>

|

||||

</description>

|

||||

<on-ambari-upgrade add="true"/>

|

||||

</property>

|

||||

|

||||

<property>

|

||||

<name>spring.datasource.poolPreparedStatements</name>

|

||||

<value>true</value>

|

||||

<value-attributes>

|

||||

<type>boolean</type>

|

||||

</value-attributes>

|

||||

<description>

|

||||

Enable PSCache (the prepared statement cache) and specify the PSCache size for each connection

|

||||

</description>

|

||||

<on-ambari-upgrade add="true"/>

|

||||

</property>

|

||||

<property>

|

||||

<name>spring.datasource.maxPoolPreparedStatementPerConnectionSize</name>

|

||||

<value>20</value>

|

||||

<value-attributes>

|

||||

<type>int</type>

|

||||

</value-attributes>

|

||||

<description></description>

|

||||

<on-ambari-upgrade add="true"/>

|

||||

</property>

|

||||

<property>

|

||||

<name>spring.datasource.spring.datasource.filters</name>

|

||||

<value>stat,wall,log4j</value>

|

||||

<description></description>

|

||||

<on-ambari-upgrade add="true"/>

|

||||

</property>

|

||||

<property>

|

||||

<name>spring.datasource.connectionProperties</name>

|

||||

<value>druid.stat.mergeSql=true;druid.stat.slowSqlMillis=5000</value>

|

||||

<description></description>

|

||||

<on-ambari-upgrade add="true"/>

|

||||

</property>

|

||||

|

||||

<property>

|

||||

<name>mybatis-plus.mapper-locations</name>

|

||||

<value>classpath*:/org.apache.dolphinscheduler.dao.mapper/*.xml</value>

|

||||

<description></description>

|

||||

<on-ambari-upgrade add="true"/>

|

||||

</property>

|

||||

<property>

|

||||

<name>mybatis-plus.typeEnumsPackage</name>

|

||||

<value>org.apache.dolphinscheduler.*.enums</value>

|

||||

<description></description>

|

||||

<on-ambari-upgrade add="true"/>

|

||||

</property>

|

||||

<property>

|

||||

<name>mybatis-plus.typeAliasesPackage</name>

|

||||

<value>org.apache.dolphinscheduler.dao.entity</value>

|

||||

<description>

|

||||

Entity scan, where multiple packages are separated by a comma or semicolon

|

||||

</description>

|

||||

<on-ambari-upgrade add="true"/>

|

||||

</property>

|

||||

<property>

|

||||

<name>mybatis-plus.global-config.db-config.id-type</name>

|

||||

<value>AUTO</value>

|

||||

<value-attributes>

|

||||

<type>value-list</type>

|

||||

<entries>

|

||||

<entry>

|

||||

<value>AUTO</value>

|

||||

<label>AUTO</label>

|

||||

</entry>

|

||||

<entry>

|

||||

<value>INPUT</value>

|

||||

<label>INPUT</label>

|

||||

</entry>

|

||||

<entry>

|

||||

<value>ID_WORKER</value>

|

||||

<label>ID_WORKER</label>

|

||||

</entry>

|

||||

<entry>

|

||||

<value>UUID</value>

|

||||

<label>UUID</label>

|

||||

</entry>

|

||||

</entries>

|

||||

<selection-cardinality>1</selection-cardinality>

|

||||

</value-attributes>

|

||||

<description>

|

||||

Primary key type. AUTO: database auto-increment ID,

|

||||

INPUT: user-supplied ID,

|

||||

ID_WORKER: globally unique numeric ID,

|

||||

UUID: globally unique UUID

|

||||

</description>

|

||||

<on-ambari-upgrade add="true"/>

|

||||

</property>

|

||||

<property>

|

||||

<name>mybatis-plus.global-config.db-config.field-strategy</name>

|

||||

<value>NOT_NULL</value>

|

||||

<value-attributes>

|

||||

<type>value-list</type>

|

||||

<entries>

|

||||

<entry>

|

||||

<value>IGNORED</value>

|

||||

<label>IGNORED</label>

|

||||

</entry>

|

||||

<entry>

|

||||

<value>NOT_NULL</value>

|

||||

<label>NOT_NULL</label>

|

||||

</entry>

|

||||

<entry>

|

||||

<value>NOT_EMPTY</value>

|

||||

<label>NOT_EMPTY</label>

|

||||

</entry>

|

||||

</entries>

|

||||

<selection-cardinality>1</selection-cardinality>

|

||||

</value-attributes>

|

||||

<description>

|

||||

Field strategy. IGNORED: skip the check,

|

||||

NOT_NULL: check that the field is not NULL,

|

||||

NOT_EMPTY: check that the field is not empty

|

||||

</description>

|

||||

<on-ambari-upgrade add="true"/>

|

||||

</property>

|

||||

<property>

|

||||

<name>mybatis-plus.global-config.db-config.column-underline</name>

|

||||

<value>true</value>

|

||||

<value-attributes>

|

||||

<type>boolean</type>

|

||||

</value-attributes>

|

||||

<description></description>

|

||||

<on-ambari-upgrade add="true"/>

|

||||

</property>

|

||||

<property>

|

||||

<name>mybatis-plus.global-config.db-config.logic-delete-value</name>

|

||||

<value>1</value>

|

||||

<value-attributes>

|

||||

<type>int</type>

|

||||

</value-attributes>

|

||||

<description></description>

|

||||

<on-ambari-upgrade add="true"/>

|

||||

</property>

|

||||

<property>

|

||||

<name>mybatis-plus.global-config.db-config.logic-not-delete-value</name>

|

||||

<value>0</value>

|

||||

<value-attributes>

|

||||

<type>int</type>

|

||||

</value-attributes>

|

||||

<description></description>

|

||||

<on-ambari-upgrade add="true"/>

|

||||

</property>

|

||||

<property>

|

||||

<name>mybatis-plus.global-config.db-config.banner</name>

|

||||

<value>true</value>

|

||||

<value-attributes>

|

||||

<type>boolean</type>

|

||||

</value-attributes>

|

||||

<description></description>

|

||||

<on-ambari-upgrade add="true"/>

|

||||

</property>

|

||||

|

||||

<property>

|

||||

<name>mybatis-plus.configuration.map-underscore-to-camel-case</name>

|

||||

<value>true</value>

|

||||

<value-attributes>

|

||||

<type>boolean</type>

|

||||

</value-attributes>

|

||||

<description></description>

|

||||

<on-ambari-upgrade add="true"/>

|

||||

</property>

|

||||

<property>

|

||||

<name>mybatis-plus.configuration.cache-enabled</name>

|

||||

<value>false</value>

|

||||

<value-attributes>

|

||||

<type>boolean</type>

|

||||

</value-attributes>

|

||||

<description></description>

|

||||

<on-ambari-upgrade add="true"/>

|

||||

</property>

|

||||

<property>

|

||||

<name>mybatis-plus.configuration.call-setters-on-nulls</name>

|

||||

<value>true</value>

|

||||

<value-attributes>

|

||||

<type>boolean</type>

|

||||

</value-attributes>

|

||||

<description></description>

|

||||

<on-ambari-upgrade add="true"/>

|

||||

</property>

|

||||

<property>

|

||||

<name>mybatis-plus.configuration.jdbc-type-for-null</name>

|

||||

<value>null</value>

|

||||

<description></description>

|

||||

<on-ambari-upgrade add="true"/>

|

||||

</property>

|

||||

<property>

|

||||

<name>master.exec.threads</name>

|

||||

<value>100</value>

|

||||

<value-attributes>

|

||||

<type>int</type>

|

||||

</value-attributes>

|

||||

<description></description>

|

||||

<on-ambari-upgrade add="true"/>

|

||||

</property>

|

||||

<property>

|

||||

<name>master.exec.task.num</name>

|

||||

<value>20</value>

|

||||

<value-attributes>

|

||||

<type>int</type>

|

||||

</value-attributes>

|

||||

<description></description>

|

||||

<on-ambari-upgrade add="true"/>

|

||||

</property>

|

||||

<property>

|

||||

<name>master.heartbeat.interval</name>

|

||||

<value>10</value>

|

||||

<value-attributes>

|

||||

<type>int</type>

|

||||

</value-attributes>

|

||||

<description></description>

|

||||

<on-ambari-upgrade add="true"/>

|

||||

</property>

|

||||

<property>

|

||||

<name>master.task.commit.retryTimes</name>

|

||||

<value>5</value>

|

||||

<value-attributes>

|

||||

<type>int</type>

|

||||

</value-attributes>

|

||||

<description></description>

|

||||

<on-ambari-upgrade add="true"/>

|

||||

</property>

|

||||

<property>

|

||||

<name>master.task.commit.interval</name>

|

||||

<value>1000</value>

|

||||

<value-attributes>

|

||||

<type>int</type>

|

||||

</value-attributes>

|

||||

<description></description>

|

||||

<on-ambari-upgrade add="true"/>

|

||||

</property>

|

||||

<property>

|

||||

<name>master.max.cpuload.avg</name>

|

||||

<value>100</value>

|

||||

<value-attributes>

|

||||

<type>int</type>

|

||||

</value-attributes>

|

||||

<description></description>

|

||||

<on-ambari-upgrade add="true"/>

|

||||

</property>

|

||||

<property>

|

||||

<name>master.reserved.memory</name>

|

||||

<value>0.1</value>

|

||||

<value-attributes>

|

||||

<type>float</type>

|

||||

</value-attributes>

|

||||

<description></description>

|

||||

<on-ambari-upgrade add="true"/>

|

||||

</property>

|

||||

<property>

|

||||

<name>worker.exec.threads</name>

|

||||

<value>100</value>

|

||||

<value-attributes>

|

||||

<type>int</type>

|

||||

</value-attributes>

|

||||

<description></description>

|

||||

<on-ambari-upgrade add="true"/>

|

||||

</property>

|

||||

<property>

|

||||

<name>worker.heartbeat.interval</name>

|

||||

<value>10</value>

|

||||

<value-attributes>

|

||||

<type>int</type>

|

||||

</value-attributes>

|

||||

<description></description>

|

||||

<on-ambari-upgrade add="true"/>

|

||||

</property>

|

||||

<property>

|

||||

<name>worker.fetch.task.num</name>

|

||||

<value>3</value>

|

||||

<value-attributes>

|

||||

<type>int</type>

|

||||

</value-attributes>

|

||||

<description></description>

|

||||

<on-ambari-upgrade add="true"/>

|

||||

</property>

|

||||

<property>

|

||||

<name>worker.max.cpuload.avg</name>

|

||||

<value>100</value>

|

||||

<value-attributes>

|

||||

<type>int</type>

|

||||

</value-attributes>

|

||||

<description></description>

|

||||

<on-ambari-upgrade add="true"/>

|

||||

</property>

|

||||

<property>

|

||||

<name>worker.reserved.memory</name>

|

||||

<value>0.1</value>

|

||||

<value-attributes>

|

||||

<type>float</type>

|

||||

</value-attributes>

|

||||

<description></description>

|

||||

<on-ambari-upgrade add="true"/>

|

||||

</property>

|

||||

|

||||

</configuration>
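The properties above pair each `<value>` with an optional `<type>` under `<value-attributes>` (`int`, `float`, `boolean`). As a rough illustration of how such a file could be read and type-checked outside Ambari, here is a minimal Python sketch. The `load_properties` helper, the `CONVERTERS` table, and the embedded `SAMPLE` excerpt are assumptions made for this example; they are not part of this commit or of Ambari's own tooling.

```python
import xml.etree.ElementTree as ET

# Hypothetical excerpt that mirrors the property layout used in the file above.
SAMPLE = """
<configuration>
  <property>
    <name>master.exec.threads</name>
    <value>100</value>
    <value-attributes><type>int</type></value-attributes>
  </property>
  <property>
    <name>master.reserved.memory</name>
    <value>0.1</value>
    <value-attributes><type>float</type></value-attributes>
  </property>
  <property>
    <name>spring.datasource.testOnBorrow</name>
    <value>true</value>
    <value-attributes><type>boolean</type></value-attributes>
  </property>
  <property>
    <name>spring.datasource.validationQuery</name>
    <value>SELECT 1</value>
  </property>
</configuration>
"""


def _to_bool(text):
    # Accept only the two literals that appear in the configuration files.
    if text not in ("true", "false"):
        raise ValueError(f"not a boolean: {text!r}")
    return text == "true"


# Assumed mapping from declared <type> to a Python converter.
CONVERTERS = {"int": int, "float": float, "boolean": _to_bool}


def load_properties(xml_text):
    """Return {name: value}, converting each value by its declared <type>."""
    root = ET.fromstring(xml_text.strip())
    result = {}
    for prop in root.findall("property"):
        name = prop.findtext("name")
        raw = (prop.findtext("value") or "").strip()
        declared = prop.findtext("value-attributes/type")
        # Properties without a declared type are kept as plain strings.
        convert = CONVERTERS.get(declared, str)
        result[name] = convert(raw)
    return result


props = load_properties(SAMPLE)
```

A value that does not match its declared type (for example `abc` under `<type>int</type>`) raises `ValueError` here, which makes this kind of check usable as a pre-commit lint for hand-edited files.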

|

||||

|

|

@ -0,0 +1,232 @@

|

|||

<!--

|

||||

~ Licensed to the Apache Software Foundation (ASF) under one or more

|

||||

~ contributor license agreements. See the NOTICE file distributed with

|

||||

~ this work for additional information regarding copyright ownership.

|

||||

~ The ASF licenses this file to You under the Apache License, Version 2.0

|

||||

~ (the "License"); you may not use this file except in compliance with

|

||||

~ the License. You may obtain a copy of the License at

|

||||

~

|

||||

~ http://www.apache.org/licenses/LICENSE-2.0

|

||||

~

|

||||

~ Unless required by applicable law or agreed to in writing, software

|

||||

~ distributed under the License is distributed on an "AS IS" BASIS,

|

||||

~ WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

|

||||

~ See the License for the specific language governing permissions and

|

||||

~ limitations under the License.

|

||||

-->

|

||||

<configuration>

|

||||

<property>

|

||||

<name>dolphinscheduler.queue.impl</name>

|

||||

<value>zookeeper</value>

|

||||

<description>

|

||||

Task queue implementation, default "zookeeper"

|

||||

</description>

|

||||

<on-ambari-upgrade add="true"/>

|

||||

</property>

|

||||

<property>

|

||||

<name>zookeeper.dolphinscheduler.root</name>

|

||||

<value>/dolphinscheduler</value>

|

||||

<description>

|

||||

dolphinscheduler root directory

|

||||

</description>

|

||||

<on-ambari-upgrade add="true"/>

|

||||

</property>

|

||||

<property>

|

||||

<name>zookeeper.session.timeout</name>

|

||||

<value>300</value>

|

||||

<value-attributes>

|

||||

<type>int</type>

|

||||

</value-attributes>

|

||||

<description>

|

||||

</description>

|

||||

<on-ambari-upgrade add="true"/>

|

||||

</property>

|

||||

<property>

|

||||

<name>zookeeper.connection.timeout</name>

|

||||

<value>300</value>

|

||||

<value-attributes>

|

||||

<type>int</type>

|

||||

</value-attributes>

|

||||

<description>

|

||||

</description>

|

||||

<on-ambari-upgrade add="true"/>

|

||||

</property>

|

||||

<property>

|

||||

<name>zookeeper.retry.base.sleep</name>

|

||||

<value>100</value>

|

||||

<value-attributes>

|

||||

<type>int</type>

|

||||

</value-attributes>

|

||||

<description>

|

||||

</description>

|

||||

<on-ambari-upgrade add="true"/>

|

||||

</property>

|

||||

<property>

|

||||

<name>zookeeper.retry.max.sleep</name>

|

||||

<value>30000</value>

|

||||

<value-attributes>

|

||||

<type>int</type>

|

||||

</value-attributes>

|

||||

<description>

|

||||

</description>

|

||||

<on-ambari-upgrade add="true"/>

|

||||

</property>

|

||||

<property>

|

||||

<name>zookeeper.retry.maxtime</name>

|

||||

<value>5</value>

|

||||

<value-attributes>

|

||||

<type>int</type>

|

||||

</value-attributes>

|

||||

<description>

|

||||

</description>

|

||||

<on-ambari-upgrade add="true"/>

|

||||

</property>

|

||||

|

||||

<property>

|

||||

<name>res.upload.startup.type</name>

|

||||

<display-name>Choose Resource Upload Startup Type</display-name>

|

||||

<description>

|

||||

Resource upload startup type: HDFS, S3, or NONE

|

||||

</description>

|

||||

<value>NONE</value>

|

||||

<value-attributes>

|

||||

<type>value-list</type>

|

||||

<entries>

|

||||

<entry>

|

||||

<value>HDFS</value>

|

||||

<label>HDFS</label>

|

||||

</entry>

|

||||

<entry>

|

||||

<value>S3</value>

|

||||

<label>S3</label>

|

||||

</entry>

|

||||

<entry>

|

||||

<value>NONE</value>

|

||||

<label>NONE</label>

|

||||

</entry>

|

||||

</entries>

|

||||

<selection-cardinality>1</selection-cardinality>

|

||||

</value-attributes>

|

||||

<on-ambari-upgrade add="true"/>

|

||||

</property>

|

||||

<property>

|

||||

<name>hdfs.root.user</name>

|

||||

<value>hdfs</value>

|

||||

<description>

|

||||

Users who have permission to create directories under the HDFS root path

|

||||

</description>

|

||||

<on-ambari-upgrade add="true"/>

|

||||

</property>

|

||||

<property>

|

||||

<name>data.store2hdfs.basepath</name>

|

||||

<value>/dolphinscheduler</value>

|

||||

<description>

|

||||

Base directory: resource files are stored under this HDFS path (user-configurable);

|

||||

please make sure the directory exists on HDFS and has read and write permissions.

|

||||

"/dolphinscheduler" is recommended

|

||||

</description>

|

||||

<on-ambari-upgrade add="true"/>

|

||||

</property>

|

||||

<property>

|

||||

<name>data.basedir.path</name>

|

||||

<value>/tmp/dolphinscheduler</value>

|

||||

<description>

|

||||

User data directory path (user-configurable);

|

||||

please make sure the directory exists and has read and write permissions

|

||||

</description>

|

||||

<on-ambari-upgrade add="true"/>

|

||||

</property>

|

||||

<property>

|

||||

<name>hadoop.security.authentication.startup.state</name>

|

||||

<value>false</value>

|

||||

<value-attributes>

|

||||

<type>value-list</type>

|

||||

<entries>

|

||||

<entry>

|

||||

<value>true</value>

|

||||

<label>Enabled</label>

|

||||

</entry>

|

||||

<entry>

|

||||

<value>false</value>

|

||||

<label>Disabled</label>

|

||||

</entry>

|

||||

</entries>

|

||||

<selection-cardinality>1</selection-cardinality>

|

||||

</value-attributes>

|

||||

<on-ambari-upgrade add="true"/>

|

||||

</property>

|

||||

<property>

|

||||

<name>java.security.krb5.conf.path</name>

|

||||

<value>/opt/krb5.conf</value>

|

||||

<description>

|

||||

java.security.krb5.conf path

|

||||

</description>

|

||||

<on-ambari-upgrade add="true"/>

|

||||

</property>

|

||||

<property>

|

||||

<name>login.user.keytab.username</name>

|

||||

<value>hdfs-mycluster@ESZ.COM</value>

|

||||

<description>

|

||||

LoginUserFromKeytab user

|

||||

</description>

|

||||

<on-ambari-upgrade add="true"/>

|

||||

</property>

|

||||

<property>

|

||||

<name>login.user.keytab.path</name>

|

||||

<value>/opt/hdfs.headless.keytab</value>

|

||||

<description>

|

||||

LoginUserFromKeytab path

|

||||

</description>

|

||||

<on-ambari-upgrade add="true"/>

|

||||

</property>

|

||||

<property>

|

||||

<name>resource.view.suffixs</name>

|

||||

<value>txt,log,sh,conf,cfg,py,java,sql,hql,xml,properties</value>

|

||||

<description></description>

|

||||

<on-ambari-upgrade add="true"/>

|

||||

</property>

|

||||

<property>

|

||||

<name>fs.defaultFS</name>

|

||||

<value>hdfs://mycluster:8020</value>

|

||||

<description>

|

||||

HA or single NameNode.

|

||||

For NameNode HA, copy core-site.xml and hdfs-site.xml to the conf directory.

|

||||

S3 is also supported, for example: s3a://dolphinscheduler

|

||||

</description>

|

||||

<on-ambari-upgrade add="true"/>

|

||||

</property>

|

||||

<property>

|

||||

<name>fs.s3a.endpoint</name>

|

||||

<value>http://host:9010</value>

|

||||

<description>

|

||||

S3 endpoint (required for S3)

|

||||

</description>

|

||||

<on-ambari-upgrade add="true"/>

|

||||

</property>

|

||||

<property>

|

||||

<name>fs.s3a.access.key</name>

|

||||

<value>A3DXS30FO22544RE</value>

|

||||

<description>

|

||||

S3 access key (required for S3)

|

||||

</description>

|

||||

<on-ambari-upgrade add="true"/>

|

||||

</property>

|

||||

<property>

|

||||

<name>fs.s3a.secret.key</name>

|

||||

<value>OloCLq3n+8+sdPHUhJ21XrSxTC+JK</value>

|

||||

<description>

|

||||

S3 secret key (required for S3)

|

||||

</description>

|

||||

<on-ambari-upgrade add="true"/>

|

||||

</property>

|

||||

<property>

|

||||

<name>loggerserver.rpc.port</name>

|

||||

<value>50051</value>

|

||||

<value-attributes>

|

||||

<type>int</type>

|

||||

</value-attributes>

|

||||

<description>

|

||||

</description>

|

||||

<on-ambari-upgrade add="true"/>

|

||||

</property>

|

||||

</configuration>
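Several properties above use `<type>value-list</type>` with an `<entries>` block, for example `res.upload.startup.type` with `HDFS`, `S3`, and `NONE`. The following Python sketch shows one way to check that each default `<value>` is actually one of the listed entries. The function name `invalid_value_list_defaults` and the property `example.bad.choice` are hypothetical and exist only to show a failing case; nothing here is taken from Ambari's own validation code.

```python
import xml.etree.ElementTree as ET

# Hypothetical excerpt: the first property follows the file above,
# the second is an invented counter-example with an invalid default.
SAMPLE = """
<configuration>
  <property>
    <name>res.upload.startup.type</name>
    <value>NONE</value>
    <value-attributes>
      <type>value-list</type>
      <entries>
        <entry><value>HDFS</value><label>HDFS</label></entry>
        <entry><value>S3</value><label>S3</label></entry>
        <entry><value>NONE</value><label>NONE</label></entry>
      </entries>
      <selection-cardinality>1</selection-cardinality>
    </value-attributes>
  </property>
  <property>
    <name>example.bad.choice</name>
    <value>maybe</value>
    <value-attributes>
      <type>value-list</type>
      <entries>
        <entry><value>true</value><label>Enabled</label></entry>
        <entry><value>false</value><label>Disabled</label></entry>
      </entries>
    </value-attributes>
  </property>
</configuration>
"""


def invalid_value_list_defaults(xml_text):
    """Return (name, default, allowed) for value-list defaults not in their entries."""
    root = ET.fromstring(xml_text.strip())
    problems = []
    for prop in root.findall("property"):
        if prop.findtext("value-attributes/type") != "value-list":
            continue
        allowed = [
            entry.findtext("value")
            for entry in prop.findall("value-attributes/entries/entry")
        ]
        # findtext("value") matches only the direct child, not the entry values.
        default = prop.findtext("value")
        if default not in allowed:
            problems.append((prop.findtext("name"), default, allowed))
    return problems


problems = invalid_value_list_defaults(SAMPLE)
```

With the sample input, only the invented `example.bad.choice` property is reported; `res.upload.startup.type` passes because its default `NONE` is one of its entries.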

|

||||

|

|

@ -0,0 +1,123 @@

|

|||

<!--

|

||||

~ Licensed to the Apache Software Foundation (ASF) under one or more

|

||||

~ contributor license agreements. See the NOTICE file distributed with

|

||||

~ this work for additional information regarding copyright ownership.

|

||||

~ The ASF licenses this file to You under the Apache License, Version 2.0

|

||||

~ (the "License"); you may not use this file except in compliance with

|

||||

~ the License. You may obtain a copy of the License at

|

||||

~

|

||||

~ http://www.apache.org/licenses/LICENSE-2.0

|

||||

~

|

||||

~ Unless required by applicable law or agreed to in writing, software

|

||||

~ distributed under the License is distributed on an "AS IS" BASIS,

|

||||

~ WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

|

||||

~ See the License for the specific language governing permissions and

|

||||

~ limitations under the License.

|

||||

-->

|

||||

<configuration>

|

||||

<property>

|

||||

<name>dolphin.database.type</name>

|

||||

<value>mysql</value>

|

||||

<description>The database type selected for DolphinScheduler</description>

|

||||

<display-name>Dolphin Database Type</display-name>

|

||||

<value-attributes>

|

||||

<type>value-list</type>

|

||||

<entries>

|

||||

<entry>

|

||||

<value>mysql</value>

|

||||

<label>MySQL</label>

|

||||

</entry>

|

||||

<entry>

|

||||

<value>postgresql</value>

|

||||

<label>PostgreSQL</label>

|

||||

</entry>

|

||||

</entries>

|

||||

<selection-cardinality>1</selection-cardinality>

|

||||

</value-attributes>

|

||||

<on-ambari-upgrade add="true"/>

|

||||

</property>

|

||||

|

||||

<property>

|

||||

<name>dolphin.database.host</name>

|

||||

<value></value>

|

||||

<display-name>Dolphin Database Host</display-name>

|

||||

<on-ambari-upgrade add="true"/>

|

||||

</property>

|

||||

|

||||

<property>

|

||||

<name>dolphin.database.port</name>

|

||||

<value></value>

|

||||

<display-name>Dolphin Database Port</display-name>

|

||||

<on-ambari-upgrade add="true"/>

|

||||

</property>

|

||||

|

||||

<property>

|

||||

<name>dolphin.database.username</name>

|

||||

<value></value>

|

||||

<display-name>Dolphin Database Username</display-name>

|

||||

<on-ambari-upgrade add="true"/>

|

||||

</property>

|

||||

|

||||

<property>

|

||||

<name>dolphin.database.password</name>

|

||||

<value></value>

|

||||

<display-name>Dolphin Database Password</display-name>

|

||||

<property-type>PASSWORD</property-type>

|

||||

<value-attributes>

|

||||

<type>password</type>

|

||||

</value-attributes>